Imagine sending your medical records to a cloud server so a doctor can analyze them - but the server never sees your actual data. Not your name, not your diagnosis, not even your age. Just encrypted numbers. And yet, the server still gives you a correct result: "Your risk for diabetes is 12%." That’s not science fiction. It’s homomorphic encryption.

This isn’t just another encryption method. Traditional encryption protects your data when it’s stored (at rest) or being sent (in transit). But what about when it’s being used? That’s the blind spot. Homomorphic encryption closes it. It lets computers work on encrypted data without ever decrypting it. The result? Data that stays encrypted even if the server processing it is compromised.

How Homomorphic Encryption Actually Works

At its core, homomorphic encryption is about preserving math. Normally, if you encrypt the number 5 and the number 3, you get two random-looking strings. You can’t add them. But homomorphic encryption makes sure that if you add the encrypted versions, you get an encrypted result that, when decrypted, equals 8.

It works because the encryption algorithm is designed to keep mathematical relationships intact. Addition and multiplication on ciphertexts produce ciphertexts that correspond to the same operations on the original numbers. Think of it like doing arithmetic with gloves on. You can’t feel the object, but you can still move it around, twist it, stack it - and someone with the right key can see the final shape.
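To make the "5 plus 3 equals 8" idea concrete, here is a minimal sketch using the Paillier cryptosystem, a well-known scheme that supports addition only. The primes, constant names, and function names are illustrative assumptions, and the key is far too small to be secure. In Paillier, multiplying two ciphertexts adds the numbers hidden inside them.

```python
import math
import secrets

# Toy Paillier setup. The primes are tiny demo values (an assumption for
# illustration); real deployments use primes of 1024 bits or more.
P, Q = 1789, 1861
N = P * Q
N_SQ = N * N
G = N + 1  # common simple choice of generator
LAMBDA = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # lcm(P-1, Q-1)
MU = pow(LAMBDA, -1, N)  # modular inverse; valid because G = N + 1


def encrypt(m: int) -> int:
    """Encrypt an integer 0 <= m < N using fresh randomness."""
    r = secrets.randbelow(N - 1) + 1
    while math.gcd(r, N) != 1:
        r = secrets.randbelow(N - 1) + 1
    return (pow(G, m, N_SQ) * pow(r, N, N_SQ)) % N_SQ


def decrypt(c: int) -> int:
    """Recover the plaintext with the private values LAMBDA and MU."""
    x = pow(c, LAMBDA, N_SQ)
    return ((x - 1) // N * MU) % N


def add_encrypted(c1: int, c2: int) -> int:
    """Multiplying ciphertexts adds the underlying plaintexts."""
    return (c1 * c2) % N_SQ


encrypted_five = encrypt(5)
encrypted_three = encrypt(3)
encrypted_total = add_encrypted(encrypted_five, encrypted_three)
print(decrypt(encrypted_total))
```

Whoever runs `add_encrypted` needs only the two ciphertexts and the public modulus. Only the holder of `LAMBDA` and `MU` can read the total.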

This isn’t magic. It’s built on advanced math - number theory, lattice problems, modular arithmetic. But you don’t need to understand the math to use it. You just need to know that if you encrypt your data with a homomorphic scheme, you can send it to a third party, ask them to run calculations, and get back a correct answer - without ever giving up control of your data.

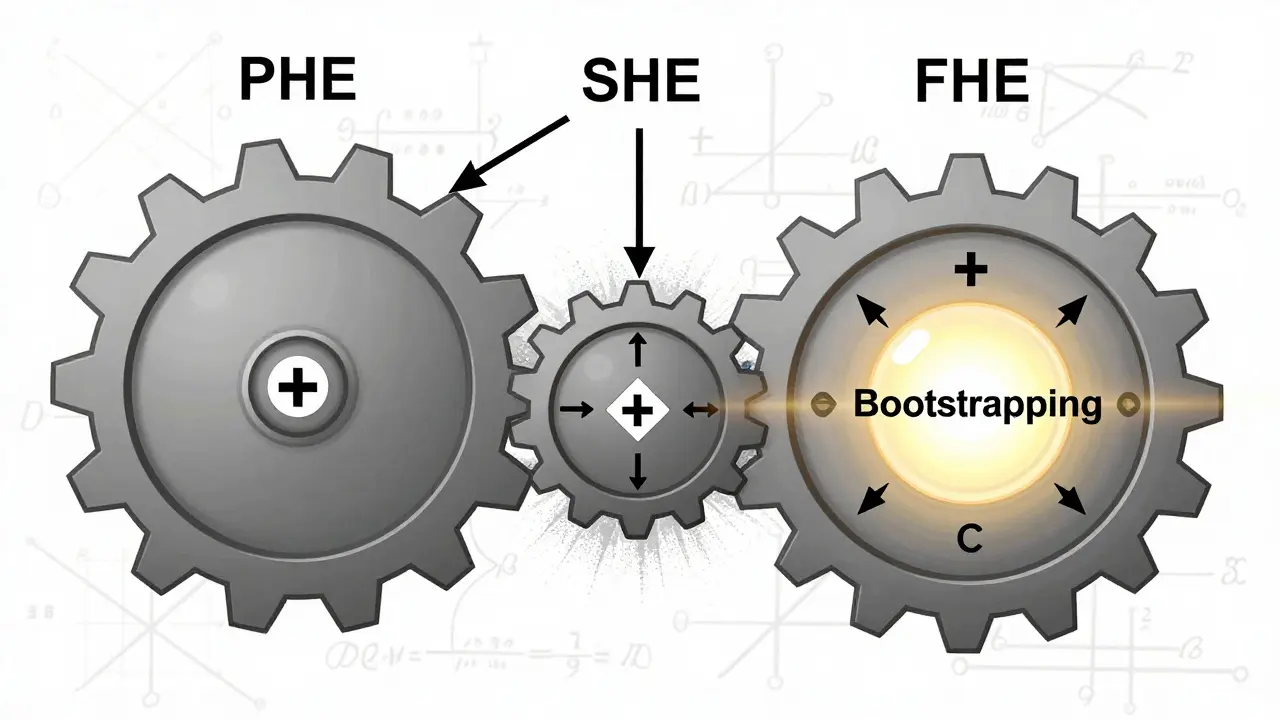

The Three Types: PHE, SHE, and FHE

Not all homomorphic encryption is the same. There are three main types, each with different power and limits.

- Partially Homomorphic Encryption (PHE) lets you do one operation - either addition or multiplication - over and over. Textbook (unpadded) RSA is an example for multiplication; Paillier is an example for addition. PHE is useful for simple tasks like tallying encrypted votes or payments, but not much else.

- Somewhat Homomorphic Encryption (SHE) allows both addition and multiplication, but only a limited number of times. After too many operations, the encrypted data gets too "noisy" and becomes unreadable. It’s like a battery that runs out after a few calculations.

- Fully Homomorphic Encryption (FHE) is the holy grail. It supports unlimited additions and multiplications. No matter how complex the calculation - a logistic regression, a neural network, a financial model - FHE can, in principle, handle it. The breakthrough came in 2009 from Craig Gentry, who described the first FHE construction, using a technique called "bootstrapping" to clean up noise and keep the encryption alive.
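The "noise" that limits SHE can be seen in a toy scheme over the integers, in the style of the DGHV construction. This is a sketch under loud assumptions: the secret key, the noise size, and every function name are made up for illustration, and the parameters are nowhere near secure. Each ciphertext hides one bit inside a small noise term. Adding ciphertexts XORs the bits, multiplying them ANDs the bits, and every multiplication makes the noise much larger.

```python
import secrets

# Illustrative, insecure parameters (assumptions, not recommendations).
SECRET_P = (1 << 64) + 13  # odd secret key of about 64 bits
NOISE_BITS = 8             # fresh noise is below 2**9 after doubling


def encrypt_bit(m: int) -> int:
    """Hide bit m as (multiple of key) + (even noise) + m."""
    q = secrets.randbits(96) + 1      # large random multiple of the key
    r = secrets.randbits(NOISE_BITS)  # small random noise
    return SECRET_P * q + 2 * r + m


def noise_bits(c: int) -> int:
    """Size of the noise term in bits; needs the secret key to compute."""
    return (c % SECRET_P).bit_length()


def decrypt_bit(c: int) -> int:
    """Correct only while the noise is still smaller than the key."""
    return (c % SECRET_P) % 2


def product_of_ones(k: int) -> int:
    """Multiply k fresh encryptions of 1 (a k-input AND)."""
    acc = encrypt_bit(1)
    for _ in range(k - 1):
        acc = acc * encrypt_bit(1)
    return acc


def show_noise_growth(max_factors: int = 10) -> None:
    """Print how the noise grows as more ciphertexts are multiplied."""
    for k in range(1, max_factors + 1):
        c = product_of_ones(k)
        print(k, noise_bits(c), decrypt_bit(c))
```

With these parameters, a product of up to seven fresh ciphertexts keeps its noise below the 64-bit key, so decryption is guaranteed to be correct. Beyond that the noise can wrap around the key and the decrypted bit becomes unreliable. That is the battery running out, and bootstrapping is the technique FHE uses to recharge it.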

FHE is what people mean today when they talk about homomorphic encryption. It’s the only version that can truly unlock the potential of privacy-preserving computation.

Why This Matters for Privacy

Many systems have to decrypt data before they can process it, and that decrypted copy is exactly what attackers go after. Hospitals store patient records in plain text so AI models can predict disease risks. Banks decrypt transaction histories to detect fraud. That’s a huge risk. If an attacker gets in, they get everything.

Homomorphic encryption flips that. Data stays encrypted from start to finish. A cloud provider can run machine learning models on encrypted health records and return accurate predictions. A bank can check if a loan applicant qualifies - without ever seeing their income, credit score, or spending habits.

This isn’t theoretical. In 2022, a consortium of European hospitals used FHE to analyze 10,000 genomic datasets. No raw DNA data left the patients’ devices. The cloud server never saw a single base pair. Yet, they still found genetic links to rare diseases. That’s the power: analysis without exposure.

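Regulators are taking notice. GDPR explicitly requires data protection by design and by default, and HIPAA and CCPA both call for safeguards around sensitive data. Homomorphic encryption is one of the few technologies that can keep data protected while it is being processed, which makes it a strong fit for those obligations.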

Real-World Use Cases Today

While FHE is still emerging, it’s already being used in high-stakes environments:

- Healthcare: Analyzing patient data across hospitals without sharing records. Predicting outbreaks, identifying drug interactions, and matching donors for organ transplants - all encrypted.

- Finance: Credit scoring without access to income statements. Fraud detection without seeing transaction details. Private risk modeling for insurance underwriting.

- Government: Secure voting systems where ballots are counted without revealing who voted for whom. Tax audits where citizens submit encrypted income data.

- AI Training: Training machine learning models on encrypted data. A company can use customer data to improve its product - without ever storing that data in plain text.

One financial services firm in the U.S. spent eight months and over $500,000 to deploy FHE for encrypted credit scoring. The result? A 40% reduction in fraud detection false positives - and zero exposure of customer data.

The Big Catch: Performance and Complexity

Homomorphic encryption isn’t magic. It’s slow. And heavy.

Encrypting a single number can turn a 4-byte integer into 1.5 megabytes of ciphertext. Running a simple addition might take 100 milliseconds instead of 0.0001 milliseconds. Multiply that by thousands of operations in a machine learning model, and you’re looking at hours of compute time.
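The figures above can be turned into ratios with a few lines of arithmetic. This sketch takes 1 MB as 10**6 bytes, and the operation count of 100,000 is an assumption picked only to show how a per-operation cost adds up.

```python
# Figures quoted in the paragraph above, converted to integer units.
PLAIN_BYTES = 4
CIPHERTEXT_BYTES = 1_500_000    # 1.5 MB, assuming 1 MB = 10**6 bytes
PLAIN_ADD_NS = 100              # 0.0001 ms expressed in nanoseconds
ENCRYPTED_ADD_NS = 100_000_000  # 100 ms expressed in nanoseconds

expansion_factor = CIPHERTEXT_BYTES // PLAIN_BYTES
slowdown_factor = ENCRYPTED_ADD_NS // PLAIN_ADD_NS


def total_hours(operations: int) -> float:
    """Sequential compute time if every operation costs one encrypted add."""
    return operations * ENCRYPTED_ADD_NS / 1e9 / 3600


print(expansion_factor)
print(slowdown_factor)
print(round(total_hours(100_000), 2))  # hypothetical workload size
```

So the quoted numbers imply a ciphertext roughly 375,000 times larger than the plaintext and an addition about a million times slower. At that rate, a hypothetical workload of 100,000 sequential operations takes just under three hours.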

Hardware helps. Modern CPUs with AVX2 or AVX-512 instructions can speed things up. But even then, FHE is 10,000 to 1,000,000 times slower than plain computation. Memory demands are brutal too - 16GB of RAM is the bare minimum for anything beyond tiny tests.

And then there’s the learning curve. Developers need to understand lattice-based cryptography, noise management, and circuit optimization. One Reddit user spent two weeks just tuning parameters for a logistic regression model. "FHE is unforgiving," they wrote. "One wrong bit, and the whole thing breaks."

Libraries like Microsoft SEAL, IBM’s HElib, and OpenFHE help - but they’re still for cryptographers, not app developers. Zama’s Concrete ML is trying to change that, letting data scientists train models on encrypted data with Python - but it’s still early days.

What’s Changing Fast

The pace of improvement is accelerating. In 2023, the Open Source Privacy Engineering group released the first FHE interoperability standards. That means code written for one library can now work with another. No more vendor lock-in.

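Hardware is catching up too. Trusted execution environments such as Intel’s SGX and AWS’s Nitro Enclaves protect data in use through hardware isolation rather than cryptography, and they can be deployed alongside FHE. Google’s research team showed how to optimize FHE for neural networks - cutting training time by 70%.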

Algorithmic breakthroughs are happening. Researchers are building "leveled" FHE schemes that fix the depth of the computation in advance and so avoid bootstrapping - the biggest performance killer. One 2023 study showed a 12x speedup by eliminating unnecessary noise refresh cycles.

Market analysts expect the FHE market to grow from $120 million in 2023 to $1.2 billion by 2027. Gartner says it’s past the hype phase - now it’s in the "early adopter" stage. Banks, insurers, and healthcare providers are testing it. Not because it’s easy. But because the alternative - data breaches, regulatory fines, lost trust - is worse.

Is It Ready for You?

If you’re a developer building a consumer app? Probably not yet. The overhead is too high. The tools are too complex.

If you’re in a regulated industry handling sensitive data? Then you should be testing it - now.

Start small. Encrypt a single dataset. Run one simple calculation. See how it behaves. Measure the latency. Track the memory use. Talk to your cloud provider - Microsoft Azure, Google Cloud, and IBM Cloud all offer FHE-ready environments.
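For the "measure the latency, track the memory use" step, a small harness along these lines may be enough for a first pilot. It uses only the Python standard library. The `measure` helper and the placeholder workload are hypothetical names, and the placeholder should be swapped for a call into whichever FHE library is under test.

```python
import time
import tracemalloc
from typing import Any, Callable, Tuple


def measure(fn: Callable[[], Any]) -> Tuple[Any, float, int]:
    """Run fn once and return (result, elapsed seconds, peak traced bytes)."""
    tracemalloc.start()
    start = time.perf_counter()
    try:
        result = fn()
        elapsed = time.perf_counter() - start
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return result, elapsed, peak


def placeholder_workload() -> int:
    """Stand-in for one encrypted calculation (hypothetical)."""
    return sum(range(1000))


result, seconds, peak_bytes = measure(placeholder_workload)
print(f"result={result} latency={seconds:.6f}s peak={peak_bytes} bytes")
```

One caveat: `tracemalloc` only sees memory allocated through Python. Libraries implemented in C++ allocate outside it, so for those a process-level figure from the operating system is the more honest number.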

Homomorphic encryption won’t replace TLS or AES. But it will become the layer that protects data when it matters most - when it’s being used. And as regulations tighten and breaches grow more costly, that layer won’t be optional. It’ll be essential.

Can homomorphic encryption be hacked?

Modern homomorphic encryption schemes rest on lattice problems that are believed to be hard for classical computers and for future quantum computers alike. But the system around the encryption can be hacked. Poor key management, flawed implementations, or side-channel attacks (like timing or power analysis) can leak information. The encryption is strong, but the software using it isn’t always.

Is homomorphic encryption the same as zero-knowledge proofs?

No. Zero-knowledge proofs let one party prove they know something (like a password) without revealing it. Homomorphic encryption lets someone perform operations on encrypted data and return a result - without ever seeing the data. One is about proving knowledge; the other is about computing on secrecy.

What’s the difference between FHE and traditional encryption like AES?

AES encrypts data so it can’t be read without the key. But to use it - to search, analyze, or calculate - you have to decrypt it first. FHE lets you compute on the encrypted data directly. AES protects data at rest and in transit. FHE protects data in use - the last missing piece.

Can I use homomorphic encryption on my phone or laptop today?

You can experiment with it - libraries like Microsoft SEAL have mobile demos. But performance is terrible on consumer hardware. A simple calculation might take 10 seconds. For real-world use, you need server-grade CPUs, lots of RAM, and optimized code. It’s not ready for consumer apps yet.

Will homomorphic encryption replace blockchain for privacy?

Not replace - complement. Blockchain ensures trust through decentralization and immutability. Homomorphic encryption ensures privacy during computation. They solve different problems. Some projects combine them: storing encrypted data on-chain and processing it off-chain with FHE. Together, they create systems that are both private and transparent.

What Comes Next

The future of homomorphic encryption isn’t about making it faster - it’s about making it easier. The goal is to hide the complexity. Imagine a cloud API where you upload encrypted data, call a function like "calculate_average," and get back an encrypted result. No cryptography knowledge needed.
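A rough sketch of what such a "calculate_average" call could look like, with the client and server roles separated. It uses the additive-only Paillier scheme rather than FHE, with toy and insecure key sizes, and every function name here is hypothetical rather than part of any real cloud API. The server adds ciphertexts and never holds the private key. The client decrypts the sum and does the final division itself.

```python
import math
import secrets
from typing import List, Tuple

# --- Client-side key material (toy Paillier; demo primes are assumptions) ---
P, Q = 1789, 1861
N = P * Q
N_SQ = N * N
LAMBDA = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # private
MU = pow(LAMBDA, -1, N)                               # private


def client_encrypt(m: int) -> int:
    """Encrypt 0 <= m < N with the public modulus."""
    r = secrets.randbelow(N - 1) + 1
    while math.gcd(r, N) != 1:
        r = secrets.randbelow(N - 1) + 1
    return (pow(N + 1, m, N_SQ) * pow(r, N, N_SQ)) % N_SQ


def client_decrypt(c: int) -> int:
    """Decrypt with the private values LAMBDA and MU."""
    return ((pow(c, LAMBDA, N_SQ) - 1) // N * MU) % N


# --- Server side: sees only ciphertexts and the public value N_SQ ---
def server_encrypted_sum(ciphertexts: List[int], n_sq: int) -> Tuple[int, int]:
    """Return (encrypted sum, item count) without decrypting anything."""
    total = 1  # the value 1 acts as an encryption of zero
    for c in ciphertexts:
        total = (total * c) % n_sq
    return total, len(ciphertexts)


def calculate_average(values: List[int]) -> float:
    """Client workflow: encrypt, hand off to the server, decrypt, divide."""
    encrypted = [client_encrypt(v) for v in values]
    encrypted_sum, count = server_encrypted_sum(encrypted, N_SQ)
    return client_decrypt(encrypted_sum) / count


print(calculate_average([120, 135, 150]))
```

The design choice worth noticing is where decryption happens: only on the client, which is the party that owns the key. The item count is visible to the server in this sketch, and the sum must stay below `N` for the result to be correct.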

That’s the vision. And it’s not far off. With hardware acceleration, standardized APIs, and open-source tools maturing, we’re moving from a niche for cryptographers to a foundational layer for secure computing.

By 2030, homomorphic encryption won’t be a buzzword. It’ll be in the background - like HTTPS. You won’t notice it. But you’ll depend on it.

Keturah Hudson

February 11, 2026 AT 23:28

Homomorphic encryption feels like magic, but it’s real - and I’ve seen it in action at my hospital. We encrypted patient records and ran predictive models for sepsis risk without ever touching raw data. The doctors were skeptical until the results matched our old system perfectly. Now? They won’t go back. Privacy isn’t just ethical - it’s clinical gold.

And yes, it’s slow. But we’re not running this on a Raspberry Pi. We use Azure’s FHE-optimized VMs. The latency is acceptable for batch analysis. For real-time? Not yet. But give it two years.

Brittany Meadows

February 13, 2026 AT 00:50

So you’re telling me the government and Big Tech are letting us ‘compute on encrypted data’… but they’re NOT telling us what they’re REALLY doing with it? 😏

Bro. If they can run a neural net on your encrypted DNA, they can also run a backdoor. They don’t need to see your data - they just need to see the *pattern* of your encrypted outputs. And guess who owns the pattern analysis? 🤔

Homomorphic encryption is just quantum-proof lipstick on a surveillance pig. 🐷🔒

SAKTHIVEL A

February 14, 2026 AT 00:29

It is imperative to underscore that the theoretical underpinnings of homomorphic encryption are predicated upon the computational intractability of lattice-based cryptographic problems - specifically, the Learning With Errors (LWE) paradigm. The operational complexity, however, remains a formidable impediment to scalability.

Moreover, the notion that FHE constitutes a panacea for privacy-preserving computation is an epistemological fallacy. One must account for the metadata leakage inherent in temporal patterns, memory access trajectories, and power consumption signatures - vectors which remain unmitigated even under ideal cryptographic conditions.

krista muzer

February 15, 2026 AT 23:14

i just tried playing with microsoft seal on my laptop and holy cow it took 3 minutes to add two numbers 😭

like… i get the math is wild and all but if my phone can’t even do a simple average without overheating, how is this supposed to be for real people? i love the idea but the UX is like trying to use a supercomputer to make toast. we need a ‘fhe for dummies’ app. like, plug in your data, click ‘encrypt and compute’, get result. no terminal, no params, no ‘bootstrapping’ nonsense.

also i spelled bootstrapping wrong. whoops.

Christopher Wardle

February 17, 2026 AT 09:03

The promise is compelling: computation without exposure. But we must not confuse the mechanism with the moral outcome. Encryption doesn’t absolve design flaws. A system that encrypts data but logs query patterns still leaks far more than it hides.

Homomorphic encryption is a tool. Not a solution. Not a shield. A tool. And tools require discipline.

John Doyle

February 18, 2026 AT 14:18

Y’all are overthinking this. I work in fintech. We tested FHE for encrypted credit checks last year. Took 48 hours to train a model. Now it runs in 7 minutes. The client data? Never touched plain text. Fraud dropped. Compliance? Automatic.

It’s not perfect. It’s not fast. But it’s *working*. And if you’re still waiting for it to be ‘easy,’ you’re not the one who needs it.

Start small. Try one dataset. You’ll be shocked how fast it pays off.

Desiree Foo

February 20, 2026 AT 05:44

I’m appalled that anyone would treat homomorphic encryption as anything other than a fundamental moral imperative. If you’re not using it to protect patient data, you’re complicit in the commodification of human biology. HIPAA isn’t a suggestion - it’s a covenant. And you? You’re breaking it with every unencrypted database.

Stop pretending this is a ‘technical challenge.’ It’s a character test.

Kaz Selbie

February 21, 2026 AT 04:27

Let’s be real: FHE is a glorified way to make your cloud bill 100x higher. You’re paying $20/hr for a GPU to do what a $0.02 CPU could do in 0.1ms if it wasn’t encrypted. And for what? So a ‘privacy-conscious’ startup can pat itself on the back?

Meanwhile, the actual attack surface? The API keys, the logging, the human error. Nobody’s patching those. But ohhh, let’s spend $500k on FHE so we can say we’re ‘secure.’

It’s performance theater. And I’m tired of it.

Robbi Hess

February 21, 2026 AT 08:57

This is the most overhyped thing since blockchain. FHE is 10,000x slower than plain code. It needs 16GB of RAM just to add two numbers. It’s not ‘the future.’ It’s a lab experiment with a PR team.

And don’t even get me started on the ‘open-source tools.’ You need a PhD in number theory just to compile them.

Save your money. Use AES. Use TLS. Use common sense.

Tammy Chew

February 22, 2026 AT 06:09

Homomorphic encryption? How quaint. In my circles, we use quantum-resistant lattice-based obfuscation with post-quantum key exchange and zero-trust attestation. This? This is like using a quill pen in the age of the printing press. The paper is nice. The ink smells good. But it’s not the future.

Also, did you know that the Gentry scheme has a known vulnerability in the noise distribution when applied to non-uniform data? No? Then you shouldn’t be writing about it.

Lindsey Elliott

February 24, 2026 AT 04:58

lol i tried FHE on my laptop and it crashed. literally. blue screen. i think my wifi card got jealous.

also i read somewhere that you can reverse-engineer the data from the ciphertext if you know the algorithm? is that true? or is that just reddit lore? someone tell me before i panic buy a vpn.

also can i use this to hide my spotify listening habits from my partner? 🤔

Holly Perkins

February 24, 2026 AT 23:04

so homomorphic encryption lets you compute on encrypted data… but you still need to decrypt the result right? so like… if i send my medical data and get back ‘12% risk’… someone still has to decrypt that to show me… so who’s decrypting it? and where? and why do i trust them more than the cloud server?

i’m confused. help.

Will Lum

February 26, 2026 AT 06:56

Been following this since 2021. It’s slow. It’s heavy. But it’s real. I’ve used it in a research project to analyze encrypted survey data across 12 countries. No one saw the raw responses. Only aggregated trends.

It’s not for everyone. But for cross-border privacy work? It’s the only thing that works.

Start with a single calculation. Don’t try to boil the ocean. Just one. Then another. You’ll surprise yourself.

Ace Crystal

February 26, 2026 AT 15:23

Look. I don’t care if it’s slow. I care that my kid’s genetic data isn’t sitting in a database labeled ‘DIAGNOSIS: CANCER RISK.’

FHE isn’t perfect. But it’s the first thing that actually makes me feel like my data belongs to me again. Not the hospital. Not the insurer. Not the cloud provider.

So yeah. It’s clunky. But so was the internet in 1995. We didn’t stop because it was slow. We built better tools.

Let’s build this one too.

Santosh kumar

February 28, 2026 AT 09:31

As someone from India, I see immense potential for FHE in rural healthcare. Imagine village clinics sending encrypted health records to central labs without needing secure fiber lines. The data stays with the patient. The analysis happens remotely.

It’s not about speed. It’s about dignity.

Let’s not wait for Silicon Valley to decide what’s ‘ready.’ We need this now.

Claire Sannen

February 28, 2026 AT 19:32

For anyone considering FHE: start with a pilot. Encrypt one dataset. Run one query. Measure the latency. Measure the cost. Compare it to your current risk profile.

If your current system has ever been breached - or even had a near-miss - then FHE isn’t a luxury. It’s insurance.

And like insurance, you don’t need it until you do. Then you’re glad you had it.

blake blackner

March 2, 2026 AT 19:17

bro i just used FHE to encrypt my grocery list and send it to my partner so she could ‘add items’ without seeing what i was buying 😂

took 12 minutes to add ‘milk’ to ‘eggs’

we’re getting divorced.

but also… it WORKED. 🤷‍♂️

kelvin joseph-kanyin

March 2, 2026 AT 21:09

YES. YES. YES.

This is the future. Not because it’s cool. But because the world is burning. Data breaches cost $5M per incident. And we’re still storing patient records like they’re 2005.

FHE isn’t the end. It’s the first step toward a world where your data stays yours.

Let’s not wait for the next breach to act.

Start today. Even if it’s just one encrypted calculation.

You’ll thank yourself later. 💪

Grace Mugambi

March 4, 2026 AT 19:23

I’ve spent years working with encrypted data in humanitarian contexts. FHE isn’t magic - but it’s the closest we’ve gotten to ethical data sharing.

I’ve seen refugees’ medical records analyzed without ever leaving their devices. No middleman. No government. Just math.

It’s not about technology. It’s about trust. And FHE rebuilds it.

Don’t dismiss it because it’s slow. Dismiss it only when you’ve tried it - and still think there’s a better way.

Crystal McCoun

March 5, 2026 AT 04:36

Please, for the love of all that is secure: if you’re implementing homomorphic encryption, use a trusted library. Don’t roll your own. Don’t tweak parameters without understanding noise growth. Don’t assume ‘it works on my machine’ means it works in production.

I’ve seen systems fail because someone thought ‘one more multiplication’ wouldn’t matter.

It does.

And when it fails, real people lose access to care, loans, or safety.

Be careful. Be thorough. Be responsible.

Elijah Young

March 5, 2026 AT 10:33

It’s fascinating how we’ve built this incredibly complex system to solve a problem we created by trusting third parties with raw data.

Maybe the real solution isn’t better encryption.

Maybe it’s less data collection.

But since that’s not happening… FHE is the best stopgap we’ve got.

Donna Patters

March 6, 2026 AT 19:32

Homomorphic encryption is a moral obligation for any organization handling sensitive data. To continue using plaintext storage in 2024 is not negligence - it is malpractice.

Regulators are watching. Auditors are auditing. And patients? They’re suing.

Implement FHE. Or prepare for liability.

Michelle Cochran

March 7, 2026 AT 09:36

Let’s not pretend this is about privacy. It’s about control. Who controls the data? Who controls the computation? Who controls the narrative?

FHE doesn’t give power to the user. It gives power to the vendor who owns the FHE infrastructure.

And who owns that? Big Tech. Again.

We’re just swapping one cage for another. One that’s slower. And more expensive.

Real privacy? Delete the data. Don’t encrypt it.

monique mannino

March 7, 2026 AT 10:48

I work in mental health. We used FHE to analyze anonymous therapy notes across 5 clinics. No names. No IDs. Just encrypted sentiment trends.

Found a spike in suicidal ideation during winter. Prevented 3 crises.

It’s not about the tech.

It’s about saving lives.

And yes. It worked. 🥹